10 Assessment and Rubric Development

How can AI tools assist with assessment and rubric development?

This chapter explores how AI tools can:

- assist with creating assessment questions based on supplied prompts or materials;

- generate project topics and assessment scenarios;

- help to develop rubrics.

Developing Assessments

AI tools can help to develop assessments that are closely connected to learning outcomes, performance standards, and industry needs. Before using AI tools to help with any assessment development, ensure that institutional and departmental policy allows it. Instructors should also consider consulting with educational developers and instructional designers at their institution to ensure that assessments align with institutional policies and practices.

Creating Questions

The Using AI to Enhance Student Learning chapter explores in detail how students can use AI tools to generate practice questions. In the same way, instructors can use AI tools to generate potential questions for assessments. Prompting AI with information about the course, program, level, and topics covered can yield a list of questions that may be reviewed and adapted for assessment. Another approach is to share a previous set of exam questions with an AI tool, and ask it to produce a new set of questions in order to vary assessments from term to term. Instructors should consult institutional and departmental policies before uploading internal information to an AI tool, and should consider uploading with data collection turned off.
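Instructors who reuse the same kind of prompt from term to term may find it convenient to assemble it from a small template. The Python sketch below is only an illustration of that idea: the function name `build_question_prompt`, its parameters, and the sample course details are all hypothetical, and the resulting text would simply be pasted into whichever AI tool institutional policy allows.

```python
def build_question_prompt(course, program, level, topics, question_types, count=10):
    """Assemble a plain-text prompt asking an AI tool for draft assessment questions.

    Every parameter name here is an illustrative assumption; nothing in this
    function calls or depends on a particular AI tool.
    """
    topic_lines = "\n".join(f"- {topic}" for topic in topics)
    type_list = ", ".join(question_types)
    return (
        "You are helping an instructor draft assessment questions.\n"
        f"Course: {course}\n"
        f"Program: {program}\n"
        f"Level: {level}\n"
        f"Topics covered:\n{topic_lines}\n"
        f"Please write {count} questions of these types: {type_list}.\n"
        "Include an answer key so the instructor can review each question."
    )


# Hypothetical course details, for illustration only.
prompt = build_question_prompt(
    course="Workplace Communication",
    program="Business Administration",
    level="first-year diploma",
    topics=["job interviews", "professional email"],
    question_types=["multiple choice", "short answer"],
    count=5,
)
print(prompt)
```

Keeping the course details as separate arguments makes it easier to vary one element, such as the topics or the question types, while leaving the rest of the prompt unchanged. The questions an AI tool returns would still need the review and adaptation described above.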

AI tools can also be prompted to generate questions based on a supplied text. RRC Polytech aerospace manufacturing technician instructor Chris Marek has used AI tools to create a bank of assessment questions by submitting a passage from an aviation manual along with information about the course level and the types of questions needed. Marek reported that the questions produced were largely appropriate to the context and needed only review and refinement.[1]

Instructors should consult copyright laws before uploading any protected content to an AI tool. Read more about AI tools and copyright considerations.

Alternate Assessment Formats

For instructors looking to diversify their assessment approach, AI tools can generate ideas for alternate assessment types or formats. As input, AI tools can accept an existing assessment or a course description and set of learning outcomes (if allowed by institutional and departmental policies). AI tools can also be prompted to produce assessment ideas related to a particular pedagogical approach, like problem-based or inquiry-based learning.[2]

Scenarios, Case Studies, and Project Topics

AI tools can be used to create detailed and varied scenario-based assessments, and to present them in engaging and novel ways, such as a detailed letter from a client requesting services.[3] Numerous RRC Polytech instructors have employed AI tools to generate a pool of possible group project topics on a given course and theme.[4] In addition to basics about the course, level, and topic, sharing examples of previous project topics can lead to better output from an AI tool. Here is an example of an RRC Polytech School of Indigenous Education instructor prompting an AI tool for potential project topics.

Even with a detailed prompt, AI tools will likely suggest some project topics that could work well, and others that miss the mark. One idea for reprompting is to evaluate the first set of project topics generated by an AI tool, let it know which ones are most useful and why, and ask for more that are similar.

Developing Rubrics

Creating rubrics is a time-consuming task for instructors and course developers. AI tools, both all-purpose tools and specialized ones like ClickUp, are well suited to assist in the process of rubric development. If a set of rubric criteria is already established, an AI tool can be prompted with a course description, a task description, and the list of criteria and levels of performance. It can then produce a first draft of the performance descriptions, which is often the most labour-intensive part of building a rubric.

Another approach is to supply an AI tool with a description of the course, the task and the learning outcomes that need to be addressed (if allowed by institutional and departmental policy), and the desired number of criteria and levels of performance. The AI tool can then be prompted to make suggestions for both criteria and descriptions. After AI tools have been reprompted for bulk editing, the rubric can be pasted into a Word document for further refining, or exported in a variety of file formats.
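For instructors comfortable with a little scripting, a finished rubric can also be held as structured data and written out in more than one format. The sketch below is a minimal illustration under stated assumptions: the names `LEVELS`, `RUBRIC`, `rubric_to_markdown`, and `rubric_to_csv` and the sample criteria and descriptions are all hypothetical, and the code uses only Python's standard `csv` and `io` modules rather than any export feature of a particular AI tool.

```python
import csv
import io

# Hypothetical performance levels and rubric content, for illustration only.
LEVELS = [
    "Developed (3 points)",
    "Developing (2 points)",
    "Beginning (1 point)",
    "Not yet (0 points)",
]
RUBRIC = {
    "Tone": [
        "Consistently adjusts tone to suit each question.",
        "Usually adjusts tone to suit each question.",
        "Sometimes adjusts tone to suit each question.",
        "Tone is not yet adjusted to suit the questions.",
    ],
    "Response structure": [
        "Consistently uses a response model such as STAR.",
        "Usually uses a response model such as STAR.",
        "Sometimes uses a response model such as STAR.",
        "A response model is not yet evident.",
    ],
}


def rubric_to_markdown(levels, rubric):
    """Return the rubric as a Markdown table (one row per criterion)."""
    header = "| Criteria | " + " | ".join(levels) + " |"
    divider = "|" + " --- |" * (len(levels) + 1)
    rows = [
        f"| {criterion} | " + " | ".join(descriptions) + " |"
        for criterion, descriptions in rubric.items()
    ]
    return "\n".join([header, divider, *rows])


def rubric_to_csv(levels, rubric):
    """Return the rubric as CSV text that a spreadsheet program can open."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["Criteria", *levels])
    for criterion, descriptions in rubric.items():
        writer.writerow([criterion, *descriptions])
    return buffer.getvalue()


markdown_text = rubric_to_markdown(LEVELS, RUBRIC)
csv_text = rubric_to_csv(LEVELS, RUBRIC)
print(markdown_text)
```

Storing the rubric once and generating each format from it means a wording change only has to be made in one place.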

In the Winter 2024 term, one course in RRC Polytech’s Teaching for Learning in Applied Education program, a three-year program taken by instructors transitioning from industry to teaching roles, began allowing students to use AI tools to assist in a rubric-development assignment.[5] Incorporating AI into rubric-creation assignments for new instructors gives them an opportunity to experiment with AI tools and to receive expert feedback on the suitability of their AI-assisted rubrics.

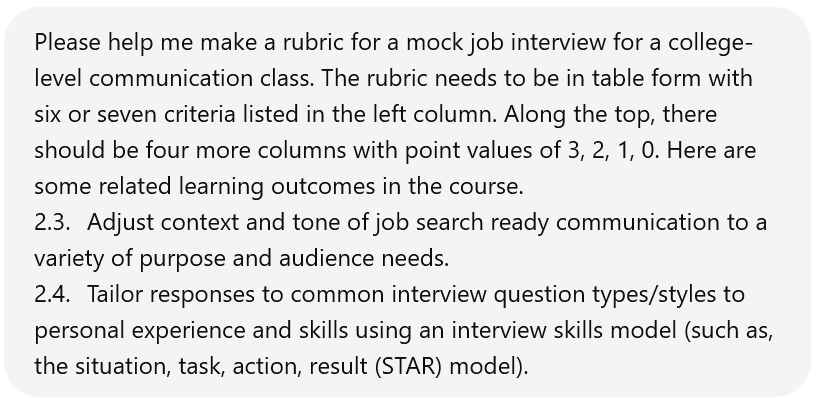

Here is an example of a prompt used to generate a rubric. By the end of the full conversation, the rubric is still not ready for student use, but it has reached the point where manual refining will be more efficient than further reprompting.

ChatGPT’s first attempt at a rubric, based on the supplied prompt, appears in the Rubric Image Description section below, followed by notes on some potential areas for reprompting and refinement.

Some issues with this rubric are well-suited to reprompting, such as asking ChatGPT to eliminate negative language in the rubric. Other issues may be more nuanced and may be quicker to refine manually, such as changing the adjectives in the header row.

For instructors who plan to develop multiple rubrics, adding some guidance to an AI tool’s custom instructions can save time. An example custom instruction is: “Every time I ask you to create a rubric, it should have four performance levels listed in the top row. Those performance levels should read (from left to right): Developed (3 points), Developing (2 points), Beginning (1 point), Not yet (0 points). Please avoid negative language in rubric descriptions. My preferred adjective for the Developed category is ‘consistently.’”

Key Takeaways

- AI tools can assist with producing assessment questions, topics, and scenarios.

- AI tools can produce detailed rubrics.

Exercises

- Try using an AI tool to generate a series of practice or test questions on a topic related to your area of instruction. Considering the level and content of the questions, what kind of reprompting or editing is needed to make them suitable for use?

- Ask an AI tool to produce a list of suggested project topics or case studies. Reflect on the appropriateness of the output, and consider what strategies could be employed to improve it, including refining prompts or providing an example.

- Experiment with using an AI tool to generate a rubric. Considering the reprompting and editing needed, reflect on whether the AI-assisted rubric surpasses traditional methods in terms of quality and production efficiency.

Rubric Image Description

The table below shows ChatGPT’s first attempt at generating a rubric for a mock job interview assignment. Following the rubric is a list of bullet points that outline some potential changes that instructional staff might like to make to the rubric before using it in their course.

| Criteria | 3 Points (Excellent) | 2 Points (Good) | 1 Point (Fair) | 0 Points (Poor) |
| --- | --- | --- | --- | --- |
| Understanding of the Position | Demonstrates an excellent understanding of the job requirements and company. | Shows a good understanding but may miss some details. | Shows a basic understanding, with several important aspects missing. | Shows no understanding of the job or company. |
| Communication Skills | Communicates ideas clearly and confidently, maintaining professionalism. | Communicates well but may lack clarity or confidence in some responses. | Communication is somewhat clear but lacks confidence and professionalism. | Poor communication skills; lacks clarity and professionalism. |
| Response to Common Interview Questions | Provides detailed, relevant responses using the STAR model effectively. | Responses are relevant but may not fully utilize the STAR model. | Responses lack detail or clear structure; limited use of STAR model. | Fails to provide relevant or structured responses; does not use STAR model. |
| Context and Tone Adjustment | Excellently adjusts context and tone to suit different questions and scenarios. | Generally adjusts context and tone appropriately but with minor inconsistencies. | Struggles to adjust context and tone, leading to inappropriate responses in some cases. | Unable to adjust context and tone; responses are consistently inappropriate. |
| Personal Experience and Skills Integration | Integrates personal experience and skills seamlessly into responses. | Integrates personal experience and skills but with some lack of relevance or clarity. | Attempts to integrate experience and skills, but it’s poorly executed or irrelevant. | Does not integrate personal experience or skills into responses. |
| Overall Impression and Professionalism | Creates an excellent overall impression; highly professional demeanor. | Good overall impression; generally professional with minor lapses. | Fair overall impression; shows a lack of professionalism in several aspects. | Poor overall impression; unprofessional demeanor throughout. |

Some Potential Issues with This Rubric

- In the first row, instructors may wish to use alternate adjectives that are more descriptive, avoid negative language, and are consistent with other course or department rubrics. Many rubrics list the adjectives first, with point values in parentheses.

- The Communication Skills criterion may be too broad, considering the rubric is for a communication course. Rubric developers may wish to get more specific or split it into multiple criteria.

- The Response to Common Interview Questions row mentions using the STAR model, but the associated learning outcome requires students to use a model such as STAR. Rubric descriptions need to indicate that STAR is not the only acceptable response model.

- The Context and Tone Adjustment criterion may be confusing. Students in this course will be aware of what it means to adjust tone but may not understand what it means to adjust context. Another word choice could increase clarity, like “adjusts tone to the context and to suit different questions and scenarios.”

- The rubric contains some negative language, like “it’s poorly executed or irrelevant.” Rubric developers might wish to choose more positive language, even when pointing out weaknesses in student performance.

- Unless a holistic category is desired, the Overall Impression and Professionalism criterion could be more specific. Since tone is already covered, as well as professionalism and the student’s communication skills, perhaps this category could be about non-verbal communication like body language, gestures, facial expressions, and eye contact.
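The check for negative language mentioned in the list above can be partly automated before deciding whether to reprompt or edit by hand. The sketch below is a rough illustration only: the word list `NEGATIVE_TERMS` and the function `flag_negative_language` are hypothetical, the sample descriptions are copied from the rubric above, and a simple word match like this is no substitute for an instructor's judgment about tone.

```python
import re

# Hypothetical list of words an instructor might treat as negative;
# it should be adjusted to suit course or department practice.
NEGATIVE_TERMS = [
    "poor", "poorly", "fails", "unable",
    "unprofessional", "irrelevant", "inappropriate",
]


def flag_negative_language(descriptions, terms=NEGATIVE_TERMS):
    """Return {label: [matched terms]} for descriptions containing listed words."""
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(term) for term in terms) + r")\b",
        re.IGNORECASE,
    )
    flagged = {}
    for label, text in descriptions.items():
        hits = sorted({match.lower() for match in pattern.findall(text)})
        if hits:
            flagged[label] = hits
    return flagged


# Three cells taken from the rubric above.
sample = {
    "Communication Skills (3)": "Communicates ideas clearly and confidently, maintaining professionalism.",
    "Personal Experience (1)": "Attempts to integrate experience and skills, but it’s poorly executed or irrelevant.",
    "Overall Impression (0)": "Poor overall impression; unprofessional demeanor throughout.",
}
flagged = flag_negative_language(sample)
for label, hits in flagged.items():
    print(label, "->", ", ".join(hits))
```

A list of flagged cells like this can then be pasted into a reprompt asking the AI tool to rephrase only those descriptions.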


1. Chris Marek, focus group, November 6, 2023.

2. Paul R. MacPherson Institute for Leadership, Innovation and Excellence in Teaching, "Generative Artificial Intelligence in Teaching and Learning at McMaster University," McMaster University, last modified 2023, https://ecampusontario.pressbooks.pub/mcmasterteachgenerativeai/.

3. Chrissi Nerantzi, Sandra Abegglen, Marianna Karatsiori, and Antonio Martínez-Arboleda, "101 Creative Ideas to Use AI in Education, A Crowdsourced Collection," Zenodo, last modified 2023, https://doi.org/10.5281/zenodo.8072950.

4. Jacob Carewick, focus group, November 6, 2023; Anonymous focus group participants, November 2023.

5. Anonymous focus group participant, November 2023.

Data collection: A feature that users can toggle on some AI tools. When data collection is turned off, the tool will not use inputs to improve its models. To toggle this setting in ChatGPT, click on the user name near the bottom left corner, then Settings, Data Controls, Chat History & Training.

Custom instructions: A set of instructions that a user establishes with an AI tool, which apply to all of their interactions. Custom instructions can be set in ChatGPT by clicking on the user's name near the bottom left corner, then on Custom Instructions.